In my previous post, I shared my current vSAN setup details. In this post, we'll take a look at the performance of the original vSAN deployment, and see how much of a performance difference Optane can make when we replace sub-optimal cache disks with Optane for OSA, followed by a full Optane and ESA redeployment.

As previously stated, the cache disks in my current 2-node vSAN OSA cluster are not optimized for caching: they are read-intensive disks, and their capacities are mismatched. When running vSAN in a production environment, it is best to adhere to the vSAN HCL and to use disks suited to their role as cache or capacity. While my 3.84TB read-intensive drives will be great for capacity, I should be using a write-intensive cache disk. Fortunately, the Intel Optane 905s that were sent to me through the vExpert program and Intel should do the trick.

For benchmarking comparisons, I'm using HCIBench 2.8.1. This free utility is provided by VMware as a "Fling". It is an OVA template that, once deployed, allows us to automatically create Linux-based VMs that run preconfigured Flexible I/O (FIO) benchmarks. Throughout the benchmarking process, I have selected "Easy Run", which automatically deploys the number of VMs it considers appropriate for my hardware configuration.
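To make the four workload profiles used in this post a bit more concrete, here is a minimal Python sketch of how they might map onto standard fio options (`--rw`, `--bs`, `--rwmixread`). This is not HCIBench's actual parameter file format; the `Workload` class, the profile names, and the `fio_args` helper are my own hypothetical names, and the options shown only describe the I/O pattern. A real job would also need a target, size, and runtime.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str          # hypothetical label, not an HCIBench identifier
    read_pct: int      # percentage of I/O that is reads
    block_size: str    # fio block size string, e.g. "4k"
    random: bool       # True for random access, False for sequential


# The four profiles benchmarked in this post.
WORKLOADS = [
    Workload("read-4k-random", 100, "4k", True),
    Workload("mixed70-4k-random", 70, "4k", True),
    Workload("mixed50-8k-random", 50, "8k", True),
    Workload("write-256k-seq", 0, "256k", False),
]


def fio_args(w: Workload) -> list[str]:
    """Translate a workload into fio options describing its I/O pattern."""
    if w.read_pct == 100:
        rw = "randread" if w.random else "read"
    elif w.read_pct == 0:
        rw = "randwrite" if w.random else "write"
    else:
        rw = "randrw" if w.random else "rw"
    args = [f"--name={w.name}", f"--rw={rw}", f"--bs={w.block_size}"]
    # The read/write mix only applies to mixed workloads.
    if 0 < w.read_pct < 100:
        args.append(f"--rwmixread={w.read_pct}")
    return args


for w in WORKLOADS:
    print("fio", " ".join(fio_args(w)))
```

Keeping the profiles as data makes it easy to see that only three knobs change between tests: the read percentage, the block size, and random versus sequential access.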

The first thing I did was select the default benchmarks and run each on the original vSAN OSA configuration. The "Easy Run" mode determined it would be best to deploy 4 VMs, which I suspect is because the cache wasn't up to par.

Of note, all OSA benchmarks were run with deduplication and compression disabled.

Here's how it went:

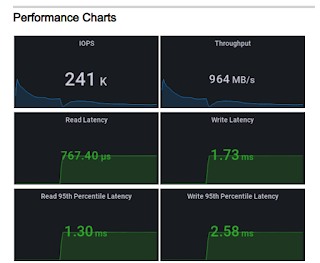

100% read, 4KB random

This is a decent result, and about what I expected given that all of the disks are read intensive.

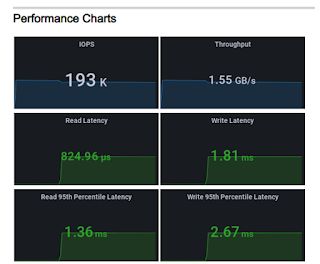

70% read, 4KB random

This is a more realistic benchmark, since it adds writes to the mix. Read latency is pretty high.

50% read/write, 8KB random

This simulates database workloads with an equal mix of reads and writes. With the larger block size, performance improves a bit, but latency remains a concern.

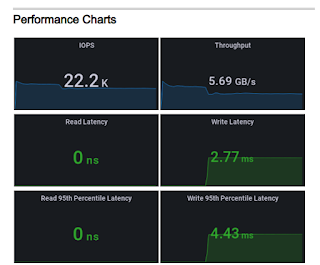

100% write, 256KB sequential

This is the throughput benchmark, most relevant to video and media workloads. We come close to saturating the 10GbE link between the two servers on this one.

For the next tests, I'll remove the 1.2TB and 1.92TB cache disks and replace them with two of the Intel Optane 905s. These are 280GB each, and have drastically better read and write performance capabilities. I could, in theory, use four cache disks, but vSAN prefers a 1:1 cache to capacity disk ratio. This was also the point where I swapped out the 10GbE 82599 network card and replaced it with a ConnectX-4 CX455 100GbE card to ensure we don't run into a bandwidth bottleneck (although I doubt I'll be able to saturate 100Gb). We should see a measurable difference across the board in terms of vSAN OSA performance.

Of note, when selecting "Easy Run" on the following tests, HCIBench deployed 8 benchmark VMs.

100% read, 4KB random

Here we can see an increase of 200K IOPS over the original configuration. Despite the read-intensive nature of the original disks, the Optane disks are rated higher for reads and substantially higher for writes. I'm not sure why the results failed to capture the 95th percentile read latency, but I would expect it to be similar to the original OSA result.

70% read, 4KB random

Unsurprisingly, write performance improved dramatically, as did read latency. 188K IOPS at 70/30 for a 2-node cluster is impressive.

50% read/write, 8KB random

Once again, we see the difference in performance thanks to the Optane drives. 172K IOPS and much lower latency.

100% write, 256KB sequential

This was surprising to me. Just by replacing the cache disks, we see a nearly 3.5x bandwidth increase. That would have more than saturated the 10GbE link, so switching to the 100GbE card gave us a better idea of what to expect. Average write latency also improved considerably!
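For a rough sense of where those link limits sit, here is a small Python sketch that converts a link speed in Gbit/s into a raw throughput ceiling in MB/s and expresses a throughput figure as a fraction of that ceiling. The function names are my own, the math ignores Ethernet and TCP/IP overhead (so real-world ceilings are somewhat lower), and the 3000 MB/s input is a made-up illustration, not a number from my results.

```python
def link_ceiling_mb_s(link_gbit_s: float) -> float:
    """Raw line rate in decimal MB/s, ignoring protocol overhead."""
    return link_gbit_s * 1000 / 8


def link_utilization(throughput_mb_s: float, link_gbit_s: float) -> float:
    """Fraction of the raw line rate that a given throughput would consume."""
    return throughput_mb_s / link_ceiling_mb_s(link_gbit_s)


print(link_ceiling_mb_s(10))    # 1250.0
print(link_ceiling_mb_s(100))   # 12500.0

# Hypothetical throughput, for illustration only (not a measured result).
example_mb_s = 3000.0
for gbit in (10, 100):
    pct = link_utilization(example_mb_s, gbit) * 100
    print(f"{gbit}GbE: {pct:.0f}% of raw line rate")
```

Any utilization above 100% means the link, not the disks, would have been the bottleneck, which is why the card swap mattered for this test.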

Overall, we can see a clear advantage in using the Intel Optane disks. It is critical to choose a cache disk that excels in write-intensive workloads, as "hot tier" data gets pushed to capacity over time. By contrast, ESA excels with uniform, mixed-use disks. We've seen that Optane has impressive write capabilities, but it also has great read performance. Optane can do it all, but what will the HCIBench numbers reflect? Stay tuned for my next blog post, where we'll compare vSAN OSA with 4 Optane cache disks and 4 capacity disks against vSAN ESA with 10 Optane disks.