In my previous post, I showed how much of a difference proper caching drives can make with vSAN OSA by replacing my read intensive disks with Intel Optane. One question remains, however: since Optane drives are high performance, mixed use drives, how would they fare in a vSAN ESA deployment? To find out, I reconfigured my vSAN cluster by deleting the witness VM, deploying a vSAN ESA witness, and loading each server with 5 Intel Optane disks. Let's see how it went.

Caveats:

- ESA turns on compression-only mode automatically, and it cannot be disabled (OSA was tested without dedupe or compression enabled)

- ESA best practices call for a minimum of three nodes. It can absolutely work with two nodes, but the true performance benefits show up in a right-sized cluster

100% read, 4K random

Interesting result: the vSAN OSA configuration with 2x Optane for caching and 2 read intensive capacity disks actually managed 24K more IOPS on this test. I can think of a few likely reasons:

- The data likely stayed in the hot tier for the entire benchmark run

- As mentioned previously, ESA is running compression while OSA is not. I plan on re-running this benchmark with only compression enabled on the OSA configuration.

- ESA works best at the recommended configuration (3+ nodes).

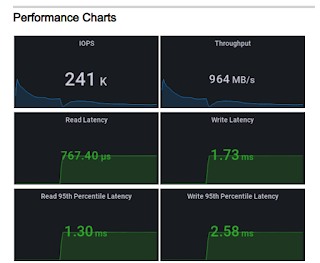

70% read, 4K random

Where Optane improved write performance in the OSA model, ESA with 5 Optane disks per node improved it further. We see an improvement of 53K IOPS, along with higher throughput and generally better latency across the board.

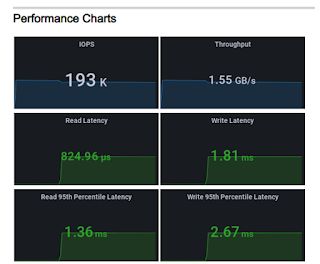
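To put the 53K IOPS gain into throughput terms, here is a minimal sketch of the usual IOPS times block size conversion. It assumes "4K" means 4096 bytes and "53K" means 53,000 operations per second; the function name is made up for illustration.

```python
def iops_to_mb_per_s(iops: int, block_size_bytes: int) -> float:
    """Convert an IOPS figure to throughput in decimal MB/s."""
    return iops * block_size_bytes / 1_000_000


# Assumption: 4K blocks are 4096 bytes and the gain is exactly 53,000 IOPS.
extra_throughput = iops_to_mb_per_s(53_000, 4 * 1024)
print(f"{extra_throughput:.1f} MB/s")  # prints "217.1 MB/s"
```

At small block sizes, a large jump in IOPS translates into a fairly modest throughput figure, which is why the sequential large-block test is the one that stresses the network.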

50% read/write, 8K random

We get a decent bump in performance compared to the OSA build. Read latency remains low, and write latency stays about the same.

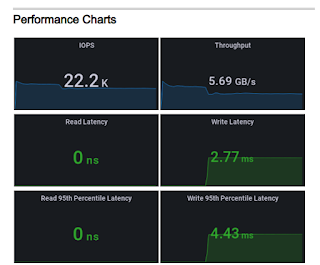

100% write, 256KB sequential

This is perhaps the biggest difference across all the tests, and it highlights the key advantage of vSAN ESA, especially for a 2 node cluster. Where some of the other tests showed percentage bumps in performance, ESA with the Optane disks managed over twice the throughput of vSAN OSA. 5.69 GB/s works out to 45.52 Gb/s over the network cards. Latency also improved dramatically.
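The GB/s to Gb/s conversion above is just a multiplication by 8 bits per byte. A minimal sketch, assuming decimal units on both sides and ignoring any protocol overhead on the wire (the function name is hypothetical):

```python
def gbytes_to_gbits(gigabytes_per_second: float) -> float:
    """Convert GB/s to Gb/s: 8 bits per byte, decimal units throughout."""
    return gigabytes_per_second * 8


line_rate = gbytes_to_gbits(5.69)
print(f"{line_rate:.2f} Gb/s")  # prints "45.52 Gb/s"
```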

Overall, the 2 node vSAN ESA configuration with 5 Intel Optane disks per server performs generally as expected, outperforming OSA with capacity disks in every test except 100% read. While OSA went blow for blow with ESA, it should be reiterated that deduplication and compression were disabled on OSA; with them enabled, IOPS tends to drop in exchange for capacity. One thing I've omitted here is usable capacity, for which I would defer to the vSAN calculators to get final numbers, but keep in mind: vSAN OSA achieved its numbers with a datastore size of ~13TB, whereas the 280GB Intel Optane disks yielded a capacity just north of 2TB.
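For a rough sense of where the ESA capacity figure comes from, the raw capacity can be worked out from the disk count. This is only a sketch of raw capacity, assuming 2 nodes, 5 disks per node, and 280 GB (decimal) per disk; it does not model vSAN overhead, mirroring, or compression, so the datastore size quoted above will be lower than this, and the vSAN calculators remain the place to get real numbers.

```python
def raw_capacity_tb(nodes: int, disks_per_node: int, disk_gb: int) -> float:
    """Raw (pre-overhead) capacity in decimal TB."""
    return nodes * disks_per_node * disk_gb / 1000


raw_tb = raw_capacity_tb(nodes=2, disks_per_node=5, disk_gb=280)
# The same figure in binary TiB (2**40 bytes per TiB).
raw_tib = raw_tb * 1e12 / 2**40

print(f"{raw_tb:.1f} TB raw, about {raw_tib:.2f} TiB")
```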

So what can we take away from this? Is OSA dead? Not by a long shot. In a real world, 2-node ROBO scenario, several questions should be asked:

- What is the workload?

- How much performance do you need?

- Are mixed use drives going to be able to meet capacity demands?

- What's the best bang for the buck hardware to meet workload requirements?

For a workload that favors capacity and is read intensive, I would favor vSAN OSA. For performance, ESA should absolutely be a consideration. And if you have the chance to add more nodes, the capacity and performance improvements tend to favor ESA.
